Here’s a quick brain exercise.

If your teledermatology wait times are pushing 45 days and diabetic retinopathy screening rates sit below payer benchmarks, would you deploy visual AI in telemedicine diagnostics tomorrow to clear the backlog? Or would you hesitate because one missed melanoma or one biased algorithm could trigger regulatory scrutiny?

That tension defines the moment. Visual AI in telemedicine diagnostics is proving effective in structured specialties like dermatology and retinopathy screening, where standardized imaging supports high sensitivity and faster triage.

But without DICOM-native integration, documented clinician oversight, and validation of demographic bias, the same technology can introduce compliance and liability exposure. The opportunity is real. So is the responsibility.

I. Where Does Visual AI Fit in Telemedicine Diagnostics Today?

Ask any telemedicine director: will visual AI in telemedicine diagnostics solve your specialist shortage or create new compliance nightmares?

The honest answer is both.

Used well, it extends the specialist’s reach across dermatology, ophthalmology, wound care, and radiology. Used poorly, it exposes your organization to bias risk, FDA scrutiny, and DICOM integration failures.

Visual AI in telemedicine diagnostics excels in structured, image-heavy workflows. It struggles in gray zones where documentation, imaging quality, and governance vary by site. That tension defines today’s adoption curve.

The opportunity is real. So is the risk.

A. Why Visual AI Is Surging in Telehealth

The shortage is not theoretical. Dermatology wait times stretch months. Gaps in retinopathy screening persist in primary care. Rural hospitals lack after-hours subspecialty radiology coverage.

Enter telemedicine imaging AI.

In teledermatology programs highlighted by Healthcare IT News, AI-assisted triage has reduced specialist backlog by prioritizing high-risk lesions first. In diabetic retinopathy, AI screening tools now achieve sensitivities above 90 percent in primary care settings, accelerating referral decisions.

Three forces drive adoption:

- Workforce scarcity

- Structured image datasets

- Clear triage pathways

This is where AI remote imaging delivers measurable value. It flags, prioritizes, and routes. It does not diagnose independently. It does not replace physicians. Flag and route.

For CIOs and CMIOs, that distinction matters.

B. The Structured Specialty Advantage

Not all specialties are equal.

Visual AI telemedicine performs best when images follow consistent capture protocols and disease patterns are well-defined.

Dermatology. Retinopathy. Wound care progression. Chest imaging triage.

In retinopathy screening, for example, health systems have achieved high sensitivity while maintaining physician oversight models. The workflow works because retinal scans are captured at standardized angles and under standardized lighting conditions.

Contrast that with complex radiology differentials or atypical presentations. Variability increases. So does risk.

This is why AI diagnostic triage telemedicine models focus on:

- Binary or threshold decisions

- Clear escalation paths

- Specialist review loops

Short, repeatable workflows. Not open-ended diagnostic reasoning.
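
The threshold-and-escalation pattern above can be sketched in a few lines. This is a minimal illustration, not a clinical implementation: the threshold values, queue names, and the `triage` function are all hypothetical, and real cut-offs would come from clinical validation.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; real cut-offs come from
# clinical validation, not from this sketch.
URGENT_THRESHOLD = 0.80
ROUTINE_THRESHOLD = 0.30


@dataclass
class TriageDecision:
    study_id: str
    risk_score: float
    queue: str              # review queue the study is routed to
    needs_specialist: bool  # True when a specialist review loop is required


def triage(study_id: str, risk_score: float) -> TriageDecision:
    """Map a model risk score to a review queue. The model never diagnoses."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= URGENT_THRESHOLD:
        return TriageDecision(study_id, risk_score, "urgent_specialist_review", True)
    if risk_score >= ROUTINE_THRESHOLD:
        return TriageDecision(study_id, risk_score, "routine_specialist_review", True)
    # Low scores are only deprioritized; a clinician still signs off.
    return TriageDecision(study_id, risk_score, "clinician_sign_off", False)


if __name__ == "__main__":
    for sid, score in [("study-1", 0.91), ("study-2", 0.45), ("study-3", 0.08)]:
        print(triage(sid, score))
```

Every branch ends in a human queue. The score changes the order of review, not who makes the call.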

That’s the sweet spot.

C. From Pilot to Production: What’s Changed

Five years ago, most visual AI in telemedicine diagnostics projects lived in innovation labs. Today, boards ask about ROI and compliance exposure in the same breath.

KLAS notes that enterprise buyers now demand:

- DICOM-native interoperability

- EHR integration

- Audit trails for AI outputs

- Bias validation protocols

In short, DICOM AI telemedicine architecture must plug directly into existing imaging workflows. No side portals. No data silos.

Here’s the shift: AI is no longer a feature. It is becoming part of the imaging workflow stack.

That changes procurement, governance, and risk modeling.

One health system CIO recently described their early pilot as “technically impressive but operationally isolated.” The lesson? If AI does not embed into scheduling, PACS, and specialist review queues, adoption stalls. Frustration follows.

You do not need another dashboard.

You need structured workflow integration with compliance guardrails.

Because visual AI in telemedicine diagnostics is not about algorithms alone. It is about governance, interoperability, and clinical accountability working in lockstep.

And that is where most deployments succeed or fail.

II. Clinical Performance: Where Visual AI Delivers Measurable Value

Performance is the first filter. Governance is the second.

Before scaling visual AI in telemedicine diagnostics, executive teams ask two questions:

- Does it improve clinical outcomes?

- Does it do so without increasing downstream risk?

In structured specialties, the data is encouraging. But context matters.

Visual AI works best when positioned as support for triage, screening, and prioritization. Not as autonomous diagnosis.

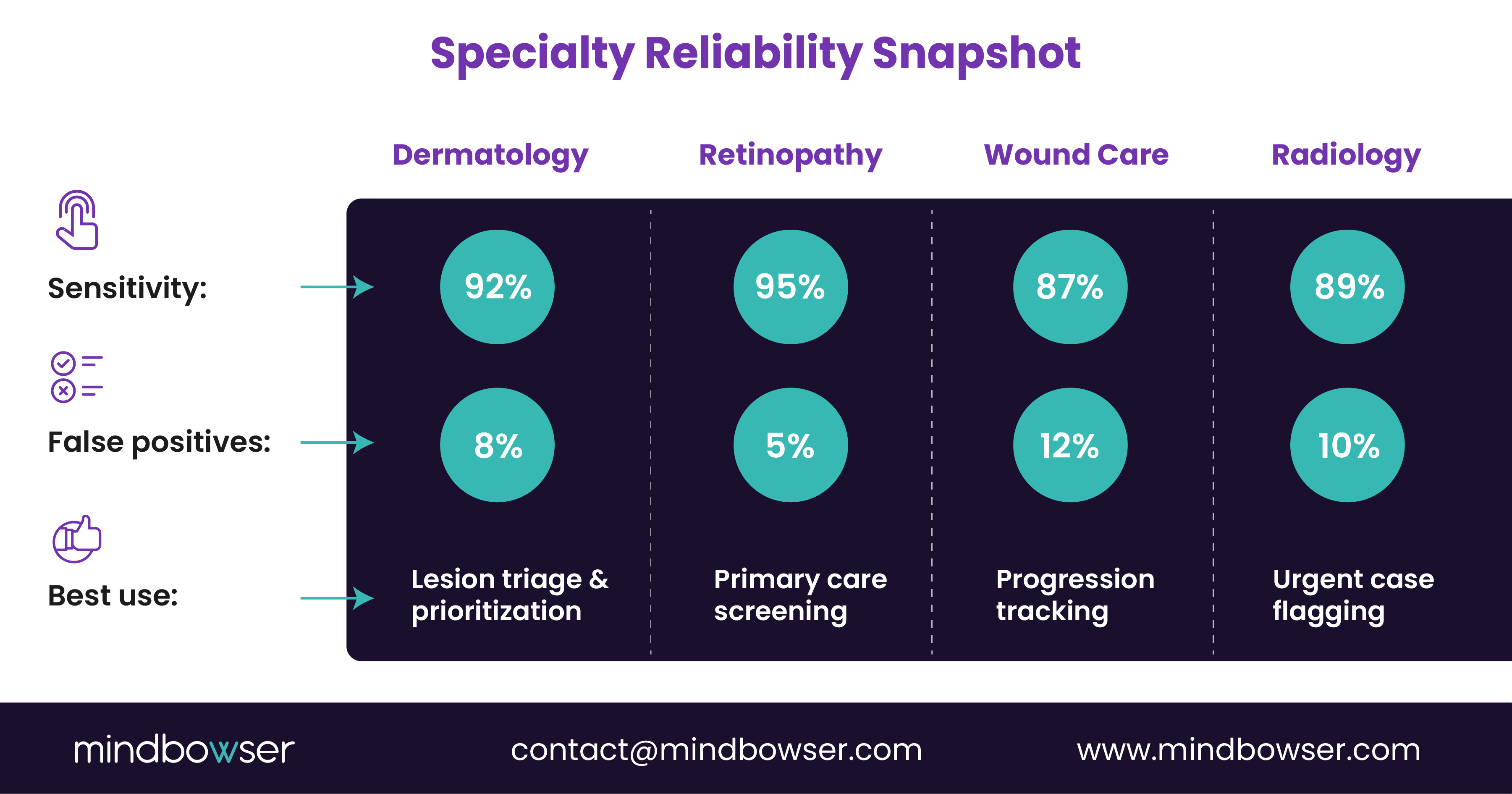

A. Specialty Benchmarks and Reliability

Let’s ground this discussion in numbers.

Across published telehealth pilots and specialty reports, telemedicine imaging AI shows high sensitivity in well-defined use cases. Dermatology triage systems frequently exceed 90 percent sensitivity in lesion-prioritization workflows. AI-enabled retinopathy screening tools have demonstrated sensitivity rates around 95 percent in primary care deployments, according to Becker’s coverage of health system implementations.

That performance profile explains adoption patterns.

Visual AI in telemedicine diagnostics performs best when:

- Imaging protocols are standardized

- Clinical endpoints are binary or threshold-based

- Human oversight remains in place

The pattern across specialties is consistent: screening and triage outperform complex differential diagnosis.

For CMIOs, this means AI diagnostic triage telemedicine should augment, not replace, clinical judgment. For CIOs, it means measuring workflow impact alongside sensitivity metrics.

Because a 95 percent sensitivity tool that increases unnecessary referrals by 20 percent may shift the burden rather than solve it.
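
A small worked example makes that tradeoff concrete. The cohort below is entirely hypothetical (1,000 patients screened, 100 with disease), and `screening_metrics` is an illustrative helper rather than anything from a specific product. It assumes every denominator is non-zero.

```python
def screening_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute basic screening metrics from confusion-matrix counts."""
    total = tp + fp + fn + tn
    return {
        "sensitivity": tp / (tp + fn),        # share of true disease caught
        "specificity": tn / (tn + fp),        # share of healthy correctly cleared
        "ppv": tp / (tp + fp),                # share of referrals that are real
        "referral_rate": (tp + fp) / total,   # share of patients sent onward
        "unnecessary_referrals": fp,
    }


# Hypothetical numbers: same sensitivity, 20 percent more false positives.
baseline = screening_metrics(tp=95, fp=150, fn=5, tn=750)
with_ai = screening_metrics(tp=95, fp=180, fn=5, tn=720)

extra = (with_ai["unnecessary_referrals"] - baseline["unnecessary_referrals"]) \
    / baseline["unnecessary_referrals"]

if __name__ == "__main__":
    for name, m in [("baseline", baseline), ("with_ai", with_ai)]:
        print(name, {k: round(v, 3) for k, v in m.items()})
    print(f"increase in unnecessary referrals: {extra:.0%}")
```

In this made-up cohort, sensitivity stays at 95 percent in both scenarios while the share of referrals that turn out to be real disease falls. That is the kind of shift a sensitivity figure alone will not reveal.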

B. Clinical Outcomes and Referral Efficiency

Where does AI remote imaging move the needle?

- Earlier detection in diabetic retinopathy programs

- Backlog reduction in teledermatology queues

- Urgent case flagging in distributed radiology models

A regional health system piloted visual AI telemedicine in its endocrinology clinics. Initially, physicians feared over-referrals. Instead, the AI flagged high-risk scans first, allowing ophthalmologists to focus on severe cases. Screening rates rose. Wait times dropped. Anxiety decreased. Relief followed.

This works. Period.

But outcomes depend on placement in the workflow.

C. False Positives, Bias, and Clinical Oversight

High sensitivity comes with tradeoffs.

False positives increase workload. Bias risks undermine trust. And FDA-regulated AI models require documented oversight, especially when clinical decisions rely on outputs, as discussed in regulatory analyses by Holland & Knight.

For telehealth leaders, three guardrails matter:

- Specialist review loops for abnormal findings

- Bias testing across demographics

- Clear patient disclosure policies

Without those, visual AI in telemedicine diagnostics shifts risk from staffing shortages to compliance exposure.

The key insight: Performance metrics are necessary but insufficient.

Clinical validation must answer two questions:

- Does it detect what it claims?

- Does it do so equitably across populations?

Only then can telemedicine imaging AI scale beyond pilots.
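
The equity question can be checked with a simple stratified metric. The sketch below computes sensitivity per demographic group and the largest gap between groups. The group labels, record layout, and sample data are hypothetical, and what counts as an acceptable gap is a clinical and governance decision that this code does not answer.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, has_disease, flagged_by_ai) tuples."""
    tp = defaultdict(int)
    fn = defaultdict(int)
    for group, has_disease, flagged in records:
        if not has_disease:
            continue  # sensitivity only considers true disease cases
        if flagged:
            tp[group] += 1
        else:
            fn[group] += 1
    groups = set(tp) | set(fn)
    return {g: tp[g] / (tp[g] + fn[g]) for g in groups}


def max_sensitivity_gap(per_group: dict) -> float:
    """Largest difference in sensitivity between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


# Hypothetical validation set: group A catches 9 of 10, group B only 7 of 10.
records = (
    [("A", True, True)] * 9 + [("A", True, False)] * 1
    + [("B", True, True)] * 7 + [("B", True, False)] * 3
    + [("A", False, False)] * 5
)

if __name__ == "__main__":
    per_group = sensitivity_by_group(records)
    print(per_group, round(max_sensitivity_gap(per_group), 2))
```

An aggregate sensitivity over this sample would hide the difference between the two groups, which is why the metric has to be stratified.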

The leaders who succeed treat AI as a governed clinical tool, not a productivity shortcut.

And that mindset sets up the next challenge: architecture.

III. Architecture Reality: Embedding Visual AI into Telemedicine Imaging Workflows

The algorithm is the easy part. Integration is where projects stall.

Most failures in visual AI in telemedicine diagnostics are not clinical in nature. They are architectural. The model may perform at 92 percent sensitivity, yet adoption drops because it sits outside the core workflow.

CIOs know this pattern. Another portal. Another login. Another queue.

If telemedicine imaging AI does not integrate into PACS, EHR, scheduling, and referral routing, clinicians will bypass it.

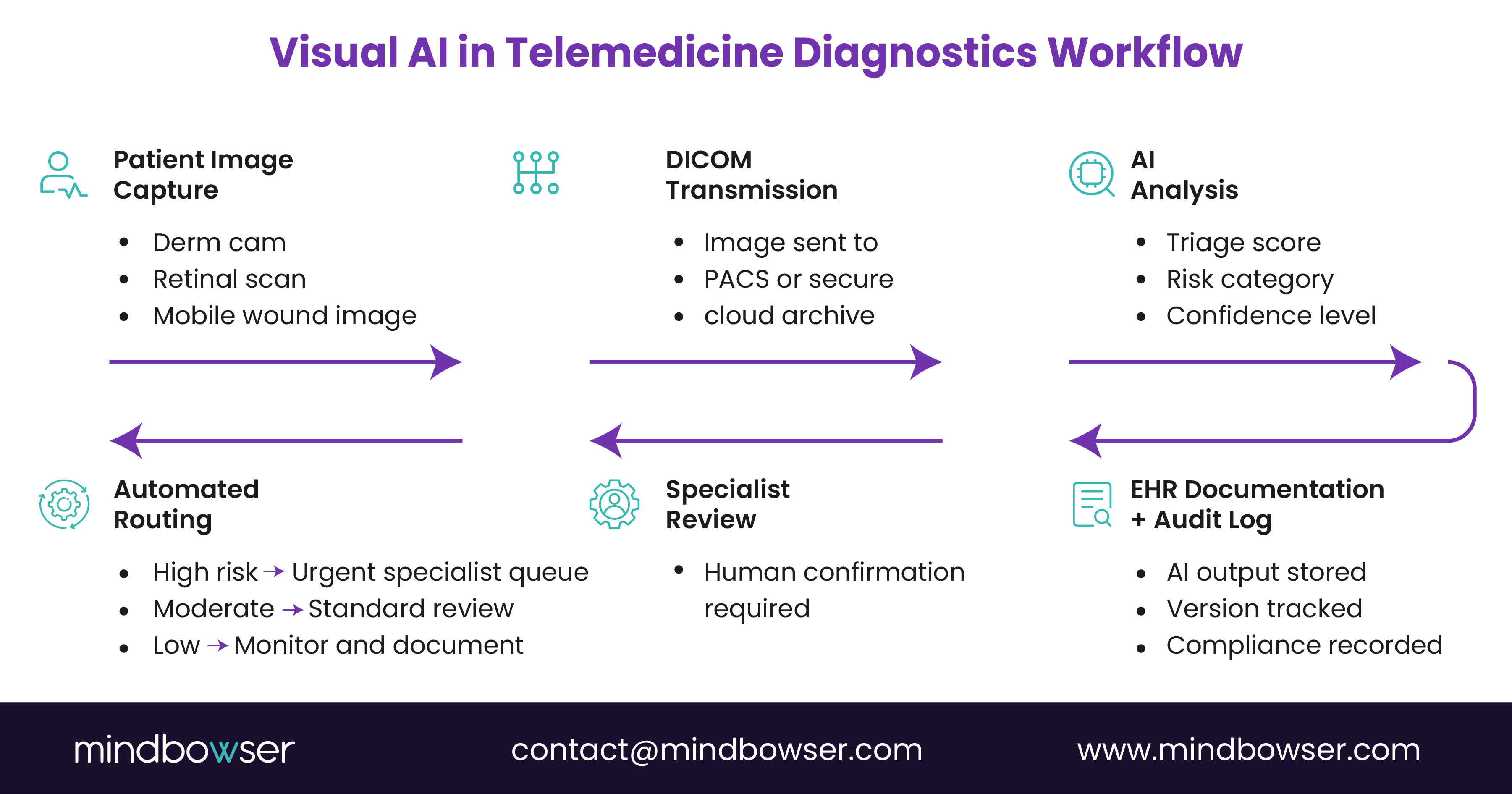

A. DICOM-Native Integration Is Non-Negotiable

Imaging workflows run on DICOM standards. Period.

KLAS reports that enterprise buyers now prioritize interoperability and native PACS integration when evaluating AI vendors. Why? Because DICOM AI telemedicine systems must:

- Ingest images directly from modality devices

- Preserve metadata integrity

- Write structured outputs back into radiology or specialty queues

- Maintain audit trails for compliance

If AI requires manual image uploads or detached cloud viewers, the risk of errors increases. So does clinician frustration.

In practice, a mature AI remote imaging architecture follows this flow:

- Image captured in a clinic or remote site

- DICOM transmission to PACS or cloud archive

- AI inference engine analyzes the image

- Structured results attach to the study

- Alert or triage flag routes to the specialist queue

No extra steps. No workflow detours.
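
The five-step flow above can be sketched as a single function. This is a standard-library simulation only: `Study`, `Archive`, `run_pipeline`, the model version string, and the threshold are hypothetical stand-ins. A real deployment would use a DICOM toolkit and store results as a proper DICOM object, which is outside this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Study:
    study_uid: str
    modality: str
    pixel_data: bytes
    ai_result: Optional[dict] = None  # structured result attached to the study


@dataclass
class Archive:
    """Stand-in for a PACS or cloud archive."""
    studies: dict = field(default_factory=dict)

    def store(self, study: Study) -> None:
        self.studies[study.study_uid] = study


def run_pipeline(study: Study, archive: Archive,
                 infer: Callable[[bytes], float],
                 specialist_queue: list,
                 flag_threshold: float = 0.8) -> Study:
    archive.store(study)                           # step 2: transmit to archive
    score = infer(study.pixel_data)                # step 3: AI inference
    study.ai_result = {"risk_score": score,        # step 4: attach result
                       "model_version": "demo-0.1"}
    if score >= flag_threshold:                    # step 5: route the flag
        specialist_queue.append(study.study_uid)
    return study


if __name__ == "__main__":
    queue: list = []
    archive = Archive()
    captured = Study("1.2.3.4", "OP", b"\x00\x01")  # step 1: image captured
    run_pipeline(captured, archive, infer=lambda px: 0.93, specialist_queue=queue)
    print(archive.studies["1.2.3.4"].ai_result, queue)
```

The result travels with the study object and the flag lands in an existing queue, so the clinician has no separate portal to open.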

That’s how visual AI telemedicine becomes invisible to the user, yet powerful in impact.

For CTOs, this means API governance, secure edge processing options, and latency modeling must be defined before procurement. Otherwise, performance claims collapse under network realities.

B. Workflow Integration Patterns That Actually Work

According to Mindbowser’s telemedicine workflow integration patterns, successful deployments share three traits:

- Embedded triage scoring within clinician dashboards

- Automated escalation rules tied to severity thresholds

- Bidirectional EHR documentation

When AI flags a high-risk lesion, it should automatically:

- Generate a structured note

- Trigger referral routing

- Notify the appropriate specialist pool

This is not a plug-in. It is a workflow extension.
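
As a rough sketch of what those three automatic actions might look like in code, the example below turns one AI flag into a structured note, a referral, and a notification. All names here (`Flag`, `escalate`, `SPECIALIST_POOLS`, the field names) are hypothetical. A production system would write to the EHR and messaging layer through their own interfaces.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Flag:
    patient_ref: str
    study_uid: str
    finding: str
    risk_score: float


# Hypothetical mapping from finding type to specialist pool.
SPECIALIST_POOLS = {"skin_lesion": "dermatology", "retinal_scan": "ophthalmology"}


def escalate(flag: Flag, now: Optional[datetime] = None) -> dict:
    """Build the note, referral, and notification for one high-risk flag."""
    now = now or datetime.now(timezone.utc)
    pool = SPECIALIST_POOLS.get(flag.finding, "general_review")
    note = {
        "patient_ref": flag.patient_ref,
        "study_uid": flag.study_uid,
        "text": f"AI triage flag: {flag.finding}, risk score {flag.risk_score:.2f}",
        "status": "pending_clinician_review",  # the AI output is never final
        "created_at": now.isoformat(),
    }
    referral = {"patient_ref": flag.patient_ref, "pool": pool, "priority": "urgent"}
    notification = {"pool": pool, "study_uid": flag.study_uid}
    return {"note": note, "referral": referral, "notification": notification}


if __name__ == "__main__":
    print(escalate(Flag("patient-001", "1.2.3.4", "skin_lesion", 0.92)))
```

Keeping the pool mapping in local configuration is one way to let routing follow the organization's referral logic rather than a vendor default.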

Health systems scaling imaging AI often move toward custom-built platforms to ensure governance, HIPAA alignment, and SOC 2 design controls remain embedded from day one. Off-the-shelf connectors rarely account for local referral logic, subspecialty pools, or multi-state telehealth credentialing rules.

If your imaging AI cannot adapt to your routing logic, you are adapting to the vendor. That rarely ends well.

For leaders evaluating architecture maturity, this question clarifies risk:

Does the AI sit beside your telemedicine stack, or inside it?

Because visual AI in telemedicine diagnostics only scales when workflow friction disappears.

C. Security, Data Residency, and Edge Decisions

Imaging data is large. Sensitive. Regulated.

Transmitting high-resolution dermatology images or retinal scans across state lines introduces latency and privacy considerations. Some health systems now evaluate hybrid or edge-based inference models to reduce exposure windows.

Key architectural decisions include:

- Cloud-only inference vs. edge processing

- PHI tokenization before AI processing

- Encryption in transit and at rest

- Role-based access for AI outputs
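
One of these decisions, PHI tokenization before AI processing, can be illustrated with keyed hashing from the Python standard library. This is a sketch under stated assumptions: the field names in `PHI_FIELDS` are hypothetical, the key would live in a managed secret store, and keyed hashing is pseudonymization rather than complete de-identification.

```python
import hashlib
import hmac

# Hypothetical metadata fields treated as PHI in this sketch.
PHI_FIELDS = ("patient_name", "mrn", "date_of_birth")


def tokenize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed, deterministic token (HMAC-SHA256)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_phi(metadata: dict, key: bytes) -> dict:
    """Return a copy of the metadata with PHI fields tokenized."""
    safe = {}
    for name, value in metadata.items():
        if name in PHI_FIELDS:
            safe[name] = tokenize(str(value), key)
        else:
            safe[name] = value  # non-PHI fields pass through unchanged
    return safe


if __name__ == "__main__":
    key = b"demo-key-do-not-use"  # assumption: real keys come from a secret store
    meta = {"patient_name": "Jane Doe", "mrn": "12345", "modality": "OP"}
    print(strip_phi(meta, key))
```

Because the token is deterministic for a given key, the results can be matched back to the right patient inside the trusted boundary without the inference service ever seeing the identifier.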

For CMIOs and CIOs, the governance layer must answer one question: Can we prove how the AI reached its conclusion?

Audit logs. Version tracking. Model update documentation.
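
A minimal sketch of a tamper-evident audit trail is shown below, assuming each AI output is logged with its model version. The entry fields and function names are hypothetical. A hash chain like this records what the model produced and when, but it does not by itself explain why the model produced it.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_entry(log: list, entry: dict) -> dict:
    """Append an entry that is linked to the previous one by its hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    body = dict(entry, prev_hash=prev_hash)
    record = dict(body, entry_hash=_digest(body))
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "entry_hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != record["entry_hash"]:
            return False
        prev_hash = record["entry_hash"]
    return True


if __name__ == "__main__":
    log: list = []
    append_audit_entry(log, {"study_uid": "1.2.3", "model_version": "demo-0.1",
                             "risk_score": 0.93, "reviewed_by": "clinician-42"})
    append_audit_entry(log, {"study_uid": "1.2.4", "model_version": "demo-0.2",
                             "risk_score": 0.12, "reviewed_by": "clinician-42"})
    print(verify_chain(log))
```

Logging the model version on every entry is what makes it possible to tie a given output to a specific model update later.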

Because once visual AI in telemedicine diagnostics moves from pilot to enterprise deployment, regulators, boards, and plaintiffs’ attorneys will expect traceability.

You cannot treat AI inference as a black box.

You must treat it as a clinical system component.

And that leads to the next hurdle: regulatory exposure and governance frameworks.
